Minth Group’s Acquisition of Nissan’s Yokohama Global Headquarters

A Strategic Move in Completing Its Japan Puzzle — Profet AI Looks Ahead with Domain Twin™

Minth Group has recently completed the acquisition of Nissan Motor’s global headquarters building in Yokohama for JPY 97 billion, adopting a sale-and-leaseback structure that enables Nissan to secure liquidity while maintaining operational flexibility.

This transaction is far more than a real estate investment. It is widely viewed as a strategic move through which Minth is placing a critical piece on its global manufacturing map, signaling its long-term commitment to the Japanese market.

Aligned with Minth’s existing global manufacturing footprint and regional growth objectives, Japan is steadily emerging as the company’s next strategic anchor.

Japan Is Not Just a New Site — It Is an Accelerator for Global Capability Replication

As a deeply embedded player in the global automotive supply chain, Minth Group operates 77 factories worldwide and serves more than 70 international automotive brands. Japan and South Korea have been clearly positioned within Minth’s long-term growth roadmap.

Against this backdrop, the Yokohama site represents more than a single asset. It has the potential to become a critical node connecting Japan’s industrial ecosystem, engineering talent, and world-class manufacturing standards with Minth’s global operations.

The key challenge Minth now faces, like many global manufacturers, is not whether success can be achieved locally, but how to keep proven success from being diluted as it is carried across countries, plants, and cultures.

From AutoML Deployment to AILM Accumulation: Proven Results in Minth’s Production Environments

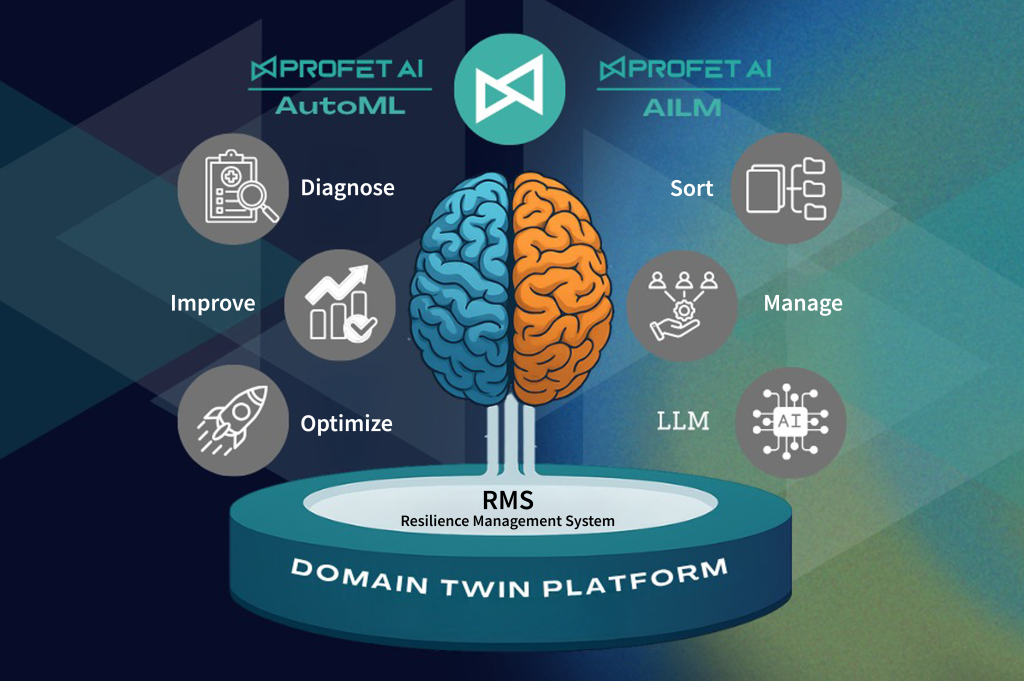

In its ongoing collaboration with Profet AI, Minth has taken the lead in deploying AutoML directly within real production environments, empowering frontline engineers to solve problems using data and AI.

In one automotive trim bending process, yield fluctuations were significant, with defect rates reaching 40–47%. By leveraging the Profet AI platform, engineers independently built models to identify key influencing factors. The first phase alone generated RMB 5.9 million (≈ USD 800k) in tangible benefits, while cultivating internal AI champions who went on to initiate additional projects.

More importantly, these achievements did not remain isolated pilot successes. They have evolved toward AILM (AI Lifecycle Management):

Models, process insights, and improvement know-how are systematically preserved and accumulated into a sustainable AI knowledge base.

Through structured training programs and proposal mechanisms, Minth collected 64 AI proposals in 2024 and successfully implemented more than 10 of them as projects. Validated solutions are already planned for rollout across 70+ global factories.

The True Value of Domain Twin™: Making Success Replicable, Traceable, and Scalable

As Minth expands further into overseas operations and the Japanese market, the core challenge is no longer simply:

“Can we do AI?”

But rather:

“How can teams across different factories, cultures, and experience levels quickly inherit and execute proven best practices?”

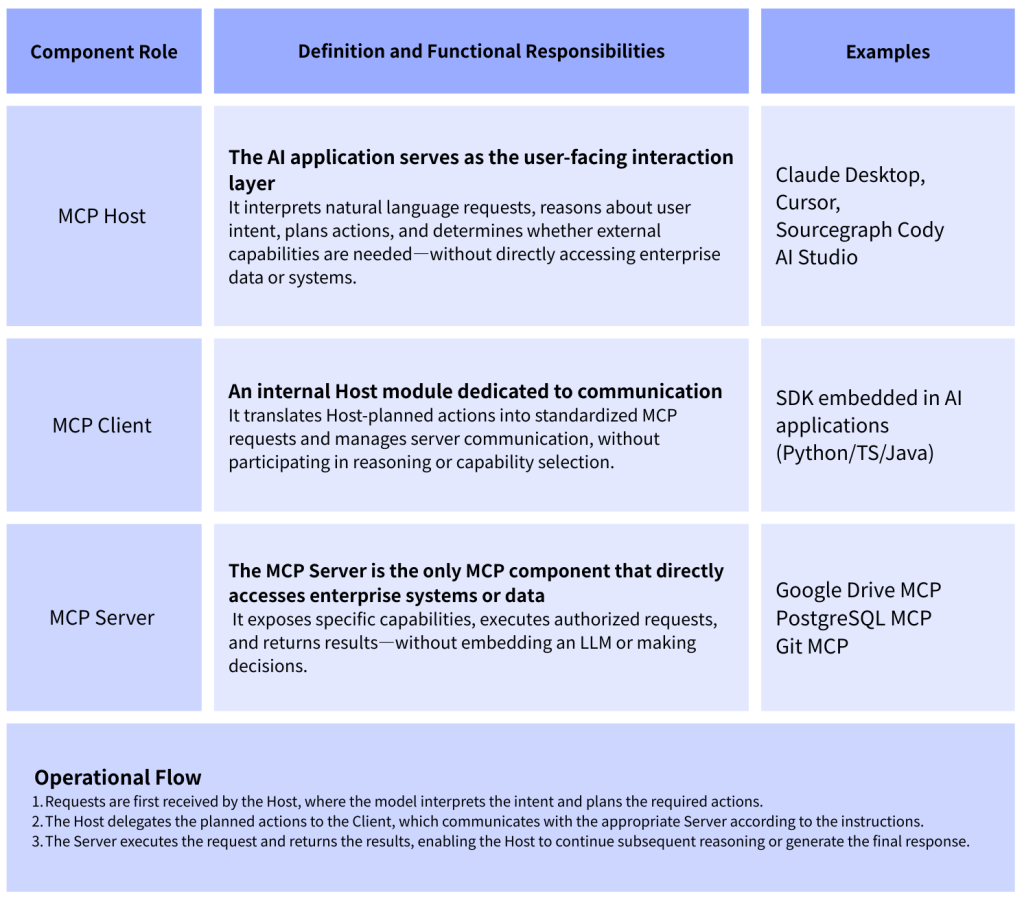

This is precisely where Domain Twin™ delivers its core value.

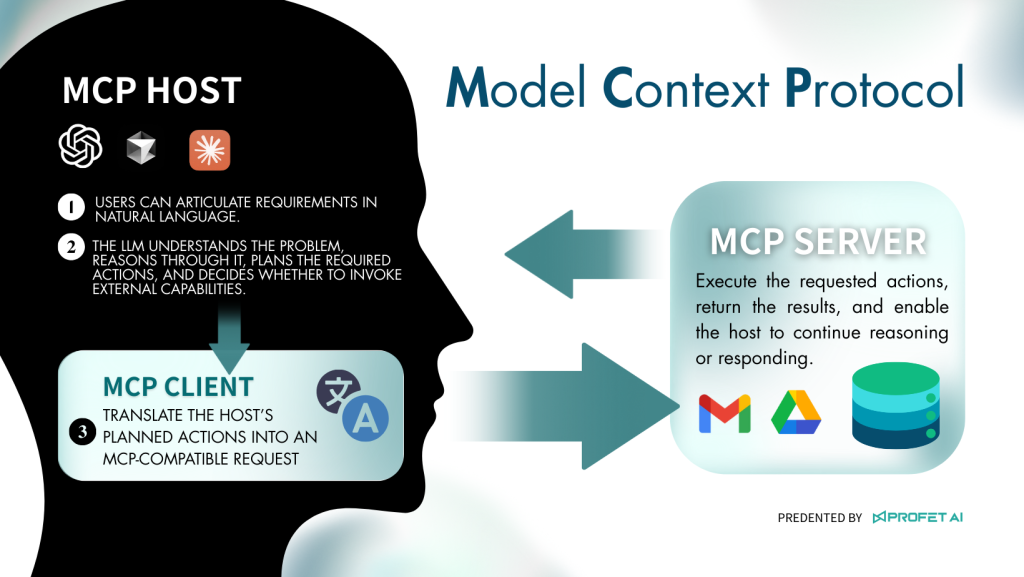

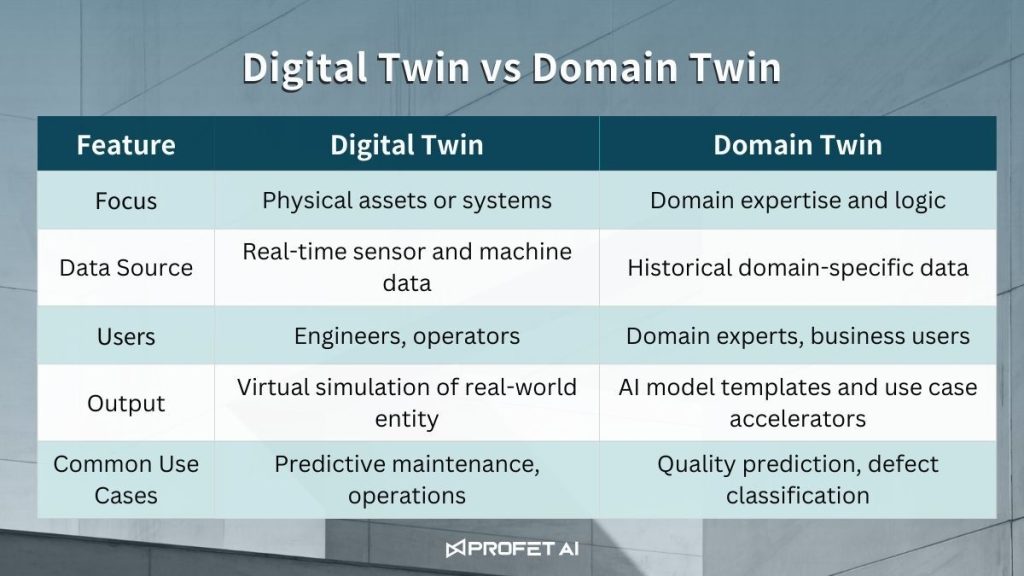

Domain Twin™ is not merely a model management tool. It is an architecture that integrates domain expertise, process understanding, AI models, and improvement logic into replicable, traceable knowledge assets.

Through Domain Twin™, validated AutoML and AILM experiences are distilled into structured knowledge units, enabling new factories — including future expansions in Japan — to operate directly on top of Minth’s globally proven best practices, rather than starting from scratch.
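To make the idea of a "structured knowledge unit" more concrete, the following is a minimal, purely illustrative sketch in Python. It is not Profet AI's actual Domain Twin™ schema or API. Every name in it (`KnowledgeUnit`, `KnowledgeBase`, `publish`, `latest`, and all field names and sample values) is a hypothetical assumption, chosen only to show how domain expertise, process understanding, a model reference, and improvement logic might be bundled into one traceable, versioned record that another plant can look up.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class KnowledgeUnit:
    """Hypothetical record bundling the four ingredients named in the text."""
    process: str            # process understanding: which process this applies to
    domain_notes: str       # domain expertise captured from engineers
    model_ref: str          # identifier of a validated AI model (assumed naming)
    improvement_logic: str  # what to adjust when the model flags a risk
    source_plant: str       # traceability: where the unit was validated
    version: int = 1        # higher number means a newer validated revision


@dataclass
class KnowledgeBase:
    """Hypothetical in-memory store, keyed by process name."""
    units: Dict[str, List[KnowledgeUnit]] = field(default_factory=dict)

    def publish(self, unit: KnowledgeUnit) -> None:
        # Append rather than overwrite, so earlier versions stay traceable.
        self.units.setdefault(unit.process, []).append(unit)

    def latest(self, process: str) -> Optional[KnowledgeUnit]:
        # A new plant would start from the newest validated unit, if one exists.
        candidates = self.units.get(process, [])
        if not candidates:
            return None
        return max(candidates, key=lambda u: u.version)


if __name__ == "__main__":
    kb = KnowledgeBase()
    # Sample values below are invented for illustration only.
    kb.publish(KnowledgeUnit(
        process="trim_bending",
        domain_notes="Notes from frontline engineers on key influencing factors.",
        model_ref="trim-bending-model-v1",
        improvement_logic="Review flagged parameters before the next batch.",
        source_plant="plant_a",
    ))
    starting_point = kb.latest("trim_bending")
    print(starting_point.model_ref, starting_point.source_plant)
```

The design choice worth noting is that publishing never overwrites an earlier version, which is one simple way to keep knowledge both replicable (any plant can fetch the latest unit) and traceable (the history and originating plant remain on record).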

According to Minth’s roadmap, by 2026 internal AI champions will take the lead, allowing Domain Twin™ knowledge to scale globally at minimal marginal cost.

Shaping the Future with Minth: Making AI a Common Language in Global Manufacturing

From the strategic positioning of the Yokohama headquarters to the systematic accumulation of AI knowledge across global plants, Minth Group is entering a pivotal phase — not merely adopting AI, but transforming it into a shared language across countries, factories, and generations of engineers.

Profet AI looks forward to continuing this journey with Minth, using Domain Twin™ as the foundation to convert individual successes into long-term competitive advantage, supporting Minth’s next stage of growth in Japan and across global markets.

Interested in How Domain Twin™ Enables Scalable Global Manufacturing?

If you are exploring how AI can evolve from isolated projects into replicable, enterprise-wide manufacturing capabilities, we invite you to connect with Profet AI and discover how Domain Twin™ is being applied across industries and production environments.

Contact Profet AI today to begin building your Manufacturing Domain Twin™ blueprint.
