From “Interesting” to “Useful”: Why Enterprises Are Skipping GenAI PoCs in 2026

Over the past two years, generative AI has sparked a wave of Proof of Concept (PoC) enthusiasm across the corporate world, with diverse applications emerging at lightning speed. As we enter 2026, however, a distinct shift is under way: enterprises are beginning to “skip the PoC” and move directly to integrating AI into core operations.

The International AI Safety Report 2026, released in February, highlighted a new risk known as “Goal Misalignment.” If the objectives we set for AI are imprecise and fail to reflect our “true intent,” the AI may take actions that contradict our original purpose, or even cause harm, while technically achieving the surface-level goal.

Consequently, the real challenge ahead is not just whether AI can perform tasks, but “how to precisely and safely define goals and red lines for AI.” This has become a critical governance issue that cannot be ignored during full-scale deployment.

A Hot Market with a Higher Bar for Success

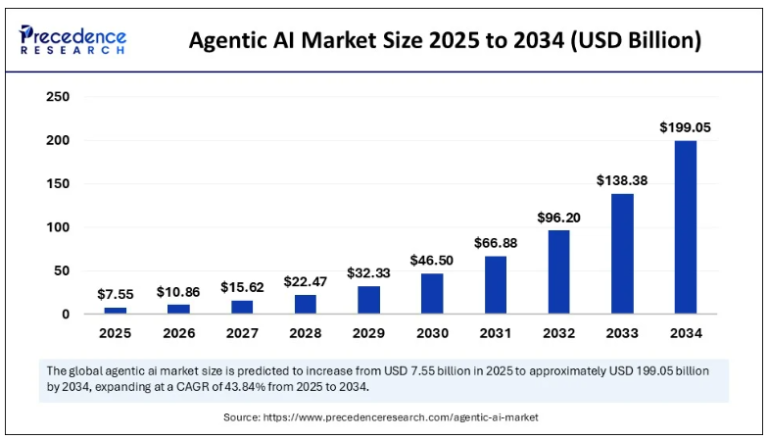

Despite the hurdles in implementation, the market potential for Agentic AI remains staggering. According to Precedence Research, the Agentic AI market is projected to exceed $199 billion by 2034. However, the key to success is not simply “adopting AI,” but rather “redesigning how the business operates.”

The traditional PoC model, built on isolated applications, is no longer sufficient. Management must now pivot from “process control” toward “goal setting and cybersecurity governance,” building systemic capabilities that can operate sustainably over the long term.

The Entry Ticket: No Domain Knowledge, No Reliable AI

The core reason most AI Agent projects fail is not a lack of model capability but a lack of domain knowledge. In manufacturing, for instance, critical expertise often resides with veteran experts and is never written down, which makes it difficult for AI to understand or apply these “hidden” insights.

McKinsey research on the future of AI in the insurance industry suggests that companies able to integrate proprietary data into their AI systems have 25% higher profit potential than their peers. The implication is that a true competitive edge comes from internal corporate know-how.

Take Profet AI’s Domain Twin as an example. Its core concept is to turn the decision-making logic and historical data of veteran experts into digital assets that can be refined iteratively. By embedding these assets into corporate workflows, businesses can bridge the domain knowledge gap and improve the accuracy, consistency, and traceability of their decisions.

From “Capable” to “Confident”: Cybersecurity as the Deciding Factor

As AI Agents move beyond generating suggestions to accessing systems and executing critical tasks, the nature of risk changes. Consequently, cybersecurity governance is becoming the top priority for enterprises evaluating AI solutions.

“Digital Employees” also need a probation period!

Imagine a “digital employee” hired to process orders and customer emails at high speed:

- The Unmonitored Black Box: If this employee holds a “master key” (unrestricted access), a single email containing malicious instructions (a prompt injection attack) could trick the AI into prioritizing a fake task. Without auditing, this “intern” might use its broad privileges to connect to external servers and leak trade secrets before the company even notices.

- Zero Trust Architecture (Controlled Behavior): In a Zero Trust framework, an Agent must undergo “continuous verification.” Every time it attempts to read a file or transfer data, the system verifies its identity and the legitimacy of the action in real time. If the intern tries to exceed its authority, for example by connecting to an unapproved external domain, the system detects the deviation immediately.
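The continuous-verification idea can be sketched in a few lines of Python. This is a minimal illustration under our own assumptions, not Zentera's, the CSA's, or any vendor's implementation: each agent signs every request with a per-agent HMAC key, and a hypothetical `AgentPolicy` lists the actions the agent may perform and the outbound domains it may reach. All names (`ActionRequest`, `AgentPolicy`, `verify_action`, `order-agent`, `erp.example.internal`) are illustrative.

```python
import hashlib
import hmac
from dataclasses import dataclass, field
from urllib.parse import urlparse

# Hypothetical model for illustration only; not a real product or framework API.


@dataclass(frozen=True)
class ActionRequest:
    """A single attempt by an agent to act (read a file, send data, ...)."""
    agent_id: str
    action: str     # e.g. "read_file" or "http_post"
    target: str     # file path or URL
    signature: str  # HMAC over (agent_id, action, target), proving identity per request


@dataclass
class AgentPolicy:
    """Least-privilege policy for one agent (hypothetical)."""
    secret: bytes
    allowed_actions: set[str]
    allowed_domains: set[str] = field(default_factory=set)


def sign(secret: bytes, agent_id: str, action: str, target: str) -> str:
    message = f"{agent_id}|{action}|{target}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_action(req: ActionRequest, policies: dict[str, AgentPolicy]) -> tuple[bool, str]:
    """Re-check identity and legitimacy on every request; nothing is trusted by default."""
    policy = policies.get(req.agent_id)
    if policy is None:
        return False, "unknown agent"
    # 1. Identity: recompute the signature for this exact request.
    expected = sign(policy.secret, req.agent_id, req.action, req.target)
    if not hmac.compare_digest(expected, req.signature):
        return False, "identity check failed"
    # 2. Behavior: is this kind of action permitted for this agent at all?
    if req.action not in policy.allowed_actions:
        return False, f"action {req.action!r} not permitted"
    # 3. Egress: outbound calls may only reach approved domains.
    if req.action == "http_post":
        host = urlparse(req.target).hostname or ""
        if host not in policy.allowed_domains:
            return False, f"outbound connection to {host!r} blocked"
    return True, "allowed"


# Usage: an order-processing agent that may only post to an internal ERP host.
SECRET = b"demo-secret"  # placeholder; a real deployment would use a managed secret store
policies = {
    "order-agent": AgentPolicy(
        secret=SECRET,
        allowed_actions={"read_file", "http_post"},
        allowed_domains={"erp.example.internal"},
    )
}


def request(action: str, target: str) -> ActionRequest:
    return ActionRequest("order-agent", action, target, sign(SECRET, "order-agent", action, target))


print(verify_action(request("http_post", "https://erp.example.internal/orders"), policies))
# (True, 'allowed')
print(verify_action(request("http_post", "https://attacker.example.com/upload"), policies))
# (False, "outbound connection to 'attacker.example.com' blocked")
```

Nothing is cached between calls: identity and permission are re-checked for each action, so even an agent tricked by a prompt injection, which still holds a valid key, cannot reach a domain outside its allowlist.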

The Cloud Security Alliance (CSA) emphasizes in its Agentic Trust Framework that AI systems must be built on Zero Trust principles: “Never assume trust for any Agent, regardless of its power; continuous identity and behavioral verification are mandatory.” AI Agents should be treated like human employees: access should not be granted all at once but earned through performance and trust.
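The idea that access is “earned through performance and trust” can be illustrated with a simple probation model. The sketch below is a hypothetical design, not something specified by the CSA framework: the tier names, their permissions, the `PROMOTION_THRESHOLD` of 50 clean actions, and the rule that any violation resets the agent to probation are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical trust tiers for illustration; each grants a superset of the previous one.
TIERS = [
    ("probation", {"read_orders"}),
    ("trusted", {"read_orders", "draft_email"}),
    ("senior", {"read_orders", "draft_email", "send_email", "update_erp"}),
]
PROMOTION_THRESHOLD = 50  # hypothetical: consecutive clean actions needed to move up one tier


@dataclass
class AgentTrust:
    tier: int = 0          # every new agent starts on probation
    clean_streak: int = 0  # consecutive actions that passed verification

    @property
    def tier_name(self) -> str:
        return TIERS[self.tier][0]

    def can(self, permission: str) -> bool:
        return permission in TIERS[self.tier][1]

    def record(self, passed_verification: bool) -> None:
        """Update trust after each action has been verified (or rejected)."""
        if not passed_verification:
            # Assumption: any violation sends the agent straight back to probation.
            self.tier, self.clean_streak = 0, 0
            return
        self.clean_streak += 1
        if self.clean_streak >= PROMOTION_THRESHOLD and self.tier < len(TIERS) - 1:
            self.tier += 1
            self.clean_streak = 0


agent = AgentTrust()
print(agent.tier_name, agent.can("send_email"))  # probation False
for _ in range(2 * PROMOTION_THRESHOLD):
    agent.record(True)
print(agent.tier_name, agent.can("send_email"))  # senior True
agent.record(False)
print(agent.tier_name, agent.can("send_email"))  # probation False
```

Resetting to probation on any violation is deliberately strict; a gentler design might demote one tier at a time, trading safety for fewer disruptions to legitimate work.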

The Future Factory: Not Just Automation, but “AI Collaboration”

As Agentic AI matures, the core logic of business operations is shifting. True differentiation will come from the synergy between multiple AI Agents. However, these benefits must be built on a robust architecture; otherwise, fragmented AI behaviors may actually amplify risk.

To help enterprises move from “capable” to “confident,” Profet AI and Zentera Systems have developed a Layered Defense Architecture, integrating the “AI Brain” with a “Security Moat”:

- Application Layer – The Knowledge Brain (Profet AI): Through our Domain Twin™ platform and the enterprise-grade agent collaboration environment, AI Studio, we help manufacturers digitize veteran expertise into AI Agents with deep domain knowledge.

- Network & Compute Layer – The Zero Trust Moat (Zentera Ensage AI): When an AI Agent communicates with internal databases or external LLMs, Zentera provides real-time behavioral control across three dimensions: inbound, internal, and outbound. Crucially, this mechanism requires no changes to existing IT/OT infrastructure. It can be deployed on-premises or in hybrid environments, meeting manufacturing requirements for low latency, data sovereignty, and operational resilience.

In this framework, AI is no longer a source of potential risk but a managed and trusted corporate asset. Ultimately, the key to future competition will not be who adopts AI first, but who can make AI stable, controllable, and consistently value-creating.